I’ve been working with deep learning algorithms lately. One that I’ve found interesting is Coconet by the Magenta group at Google. It was originally made with TensorFlow, but I struggled to make it work with current versions of that framework. So I worked on a version using PyTorch here. With a few fixes, I was able to make that work.

Coconet is described in this paper. The basic idea is that they take lots of Bach 4-part chorales, break them up into 4 measure segments, and use them as input to a deep neural network. The key insight of the paper (one of many) is to drop out some notes from each segment and reward the network when it adds back notes that match those chosen by Bach. In this way, they train the network to restore missing notes. If the chosen notes don’t match Bach’s choices, that creates a “loss”. Neural networks learn by adjusting their weights until the loss is as low as it can be. The result is a model that can intelligently re-harmonize a Bach chorale that is missing many notes, or even all of them.
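
Here is a rough sketch of that drop-and-restore idea in plain Python. It is not the Coconet code: the names `mask_notes` and `mismatch_loss` are made up for illustration, a segment is assumed to be four lists of MIDI pitch numbers, and the "loss" is just the fraction of erased notes guessed wrong, where a real network would use something like cross-entropy.

```python
import random

def mask_notes(segment, drop_prob, rng):
    """Erase notes at random. Returns (masked_segment, mask).

    segment: list of voices, each a list of MIDI pitches (assumed layout).
    mask[v][t] is True where the note was erased (replaced by None).
    """
    mask = [[rng.random() < drop_prob for _ in voice] for voice in segment]
    masked = [
        [None if erased else pitch for pitch, erased in zip(voice, row)]
        for voice, row in zip(segment, mask)
    ]
    return masked, mask

def mismatch_loss(predicted, original, mask):
    """Fraction of erased notes that the prediction got wrong.

    Only erased positions count; notes the model could already see are ignored.
    """
    erased = [(v, t) for v, row in enumerate(mask) for t, m in enumerate(row) if m]
    if not erased:
        return 0.0
    wrong = sum(predicted[v][t] != original[v][t] for v, t in erased)
    return wrong / len(erased)

# Four voices, 32 time slots each, every voice holding a single pitch.
segment = [[60 + 4 * v] * 32 for v in range(4)]
masked, mask = mask_notes(segment, drop_prob=0.5, rng=random.Random(0))
```

A prediction that restores every erased note exactly as written scores a loss of zero, and one that gets every erased note wrong scores one.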

It took around 30 hours to train the model on my humble HP800 x86 Linux box. Once I had it trained, I could use it to harmonize from an existing chorale, from some random notes, or from nothing at all. The model did its best, and many times created a reasonable chorale.

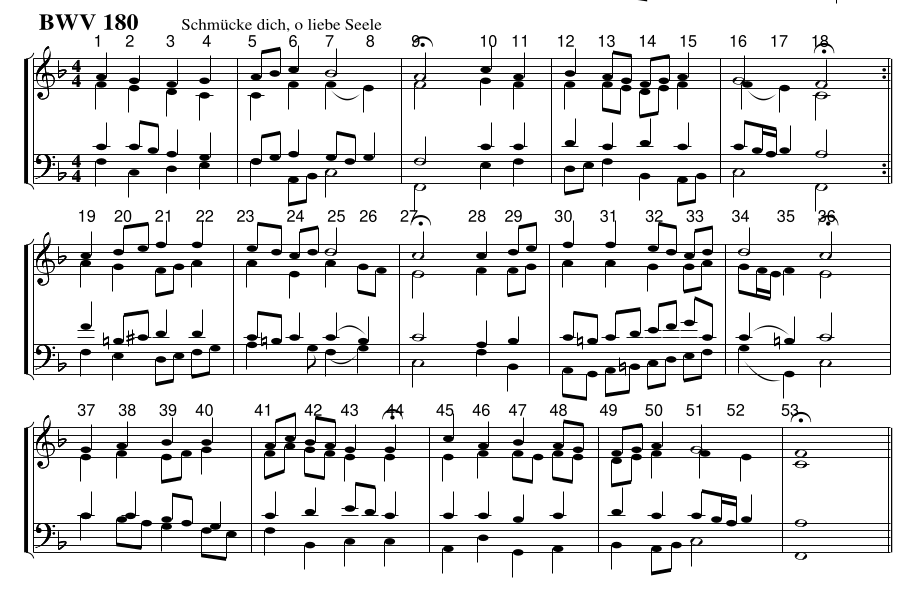

Once I had the model trained, I tried different ways to use it to make music. In the case of today’s performance, I started with the bass line from BWV 180 Schmücke dich, o liebe Seele, pictured above, and threw out the soprano, alto, and tenor lines.

The model only works with two-measure segments in 4/4 time, 32 sixteenth notes per segment, so I had to split my input chorales into segments of that length. This chorale has phrases of 2 1/2 measures, or 40 sixteenth notes, which the model rejected.

I then compressed each phrase from 40 slots down to 32 by squeezing the last 16 time slots into 8, resulting in a 32-slot segment. I divided Schmücke into six 32-slot segments, representing the five phrases and the final chord of the original chorale.
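
The squeeze can be sketched in a few lines of Python. The function name `compress_phrase` is hypothetical, and the way the last 16 slots are halved (keeping every other slot) is an assumption; averaging or picking the longer note would be equally plausible.

```python
def compress_phrase(slots, tail=16):
    """Shrink a phrase by halving its last `tail` time slots.

    slots: one voice as a list of per-sixteenth-note values (assumed layout).
    The first len(slots) - tail slots are kept as-is; from the tail,
    every other slot is kept (an assumption about how to halve it).
    """
    head, end = slots[:-tail], slots[-tail:]
    return head + end[::2]

phrase = list(range(40))            # stand-in for 40 sixteenth-note slots
compressed = compress_phrase(phrase)
print(len(compressed))              # 24 untouched slots + 8 squeezed slots = 32
```

With a 40-slot phrase, the first 24 slots pass through unchanged and the remaining 16 become 8, which gives the 32 slots the model expects.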

I then passed the bass line into the Coconet model, which created a four-part chorale. I did that four times and ended up with a 16-voice piano part. Finally, I expanded the 32-slot phrases back to 40 slots so the timing would match the original Schmücke chorale.
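
A minimal sketch of those last two steps, stacking the passes and stretching the timing back out, might look like this. Both function names are made up for illustration, the model call itself is left out, and doubling each of the last 8 slots is an assumption about how the stretch is done.

```python
def expand_phrase(slots, tail=8):
    """Stretch a 32-slot voice back to 40 slots.

    The last `tail` slots are each played twice (an assumed way to
    double their duration); everything before them is left alone.
    """
    head, end = slots[:-tail], slots[-tail:]
    stretched = [value for value in end for _ in range(2)]
    return head + stretched

def stack_passes(passes):
    """Flatten several 4-voice harmonizations into one list of voices."""
    return [voice for chorale in passes for voice in chorale]

# Four stand-in harmonizations of 4 voices x 32 slots each.
passes = [[[60 + v + p] * 32 for v in range(4)] for p in range(4)]
voices = [expand_phrase(voice) for voice in stack_passes(passes)]
print(len(voices), len(voices[0]))  # 16 voices, 40 slots each
```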

I fed segments into the model, which dutifully harmonized them as the model thought Bach might. The result is sort of musical, but not too different from Bach.

Along the way I’ve made many discoveries that I hope to exploit more as I go on. One was that some of the most interesting segments came from the final chord. It’s just an F major chord held for 32 sixteenth notes, but the model created many interesting variations on it. I hope to be able to use the model to harmonize each quarter note of the original chorale as a separate 32 time slot segment. That’s next on the agenda.
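
One way that next step might be set up, purely as a sketch: take the bass line in sixteenth-note slots, read off the pitch at the start of each quarter note, and hold it for a full 32 slots, the same way the final chord is held. The function name and the choice to sample the first slot of each quarter are assumptions, not something I have run through the model yet.

```python
def quarter_note_segments(bass_slots, slots_per_quarter=4, segment_len=32):
    """Turn each quarter note of a bass line into its own held segment.

    bass_slots: bass pitches, one per sixteenth-note slot (assumed layout).
    Returns one list of length `segment_len` per quarter note, filled with
    the pitch sounding at the start of that quarter.
    """
    segments = []
    for start in range(0, len(bass_slots), slots_per_quarter):
        pitch = bass_slots[start]
        segments.append([pitch] * segment_len)
    return segments

# Two quarter notes: F2 (MIDI 41) then G2 (MIDI 43).
bass = [41] * 4 + [43] * 4
segments = quarter_note_segments(bass)
print(len(segments), len(segments[0]))  # 2 segments of 32 slots
```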

Download here:

Schmucke #1